How to Write Prompts That Don't Drift

- Prompt drift is not random model behavior; it is a predictable decay of attention over long contexts.

- Reiterating instructions louder does not work. State management and precise re-anchoring are the only reliable solutions.

- Hard constraints like schema enforcement beat soft guidance when maintaining structure across thousands of lines.

Prompt drift is not a bug. It is the predictable decay of mathematical constraint over an extended context window.

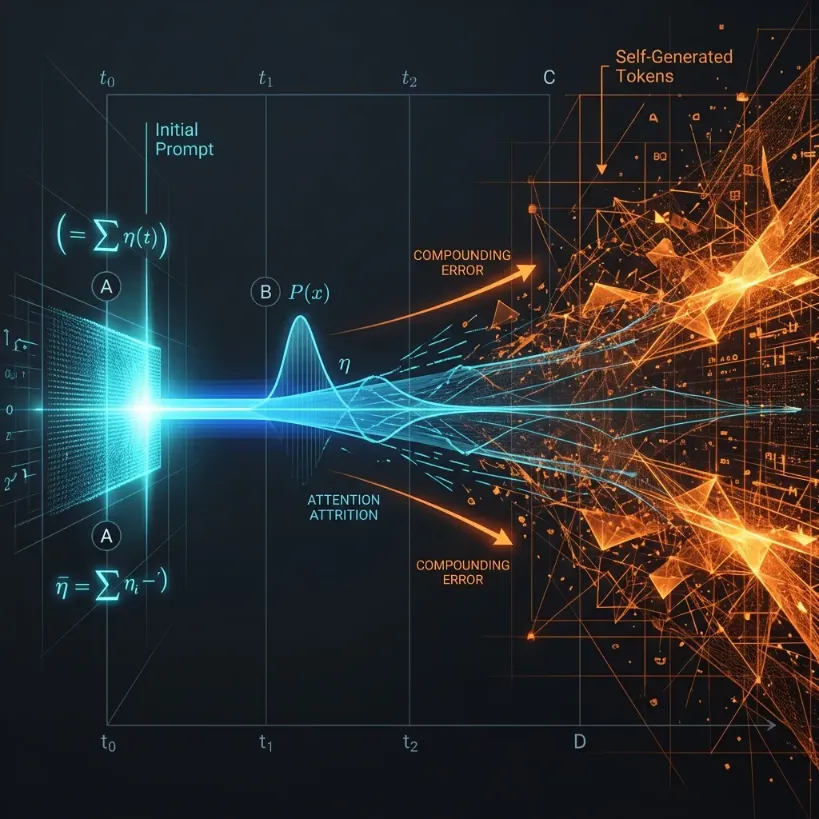

You give an LLM a precise, 400-word instruction. The first 50 tokens of output are exactly what you asked for. By , the formatting gets sloppy. By , the model has completely forgotten the persona, dropped your negative constraints, and is hallucinating generic filler. You didn’t do anything wrong. The physics of the attention mechanism just took over.

Every token the model generates dilutes the probabilistic weight of your initial instructions. To keep an AI on track from line 1 to line 10,000, you have to stop treating your prompt as a static command and start treating it as a state management system.

The Mechanics of Attention Attrition

LLMs generate text autoregressively. When predicting token , the model attends to all prior tokens. As the output grows, the absolute distance between your initial prompt and the current generation point increases.

More importantly, the proportion of the context window occupied by the model’s own generated text begins to dwarf the space occupied by your instructions.

Self-reinforcement logic takes hold. The model starts attending primarily to its most recent output rather than your initial constraints. If a slight style deviation occurs at , that deviation becomes part of the prompt for . The error compounds.

Author’s Comments: The “Reiteration” Fallacy

I continually see engineers try to fix drift by making the initial prompt louder. They use ALL CAPS, add redundant warnings, or threaten the model with penalties. This fundamentally misses how attention works. You cannot pre-load enough weight at to permanently override the gravitational pull of 8,000 newly generated tokens.

In quantitative finance, when we built credit risk models (like KMV) at Morgan Stanley, we never allowed an iterative differential equation to run unanchored for thousands of steps—compounding error inevitably blows up the distribution. LLM generation is exactly the same underlying math. The fix is structural re-anchoring, not emphatic shouting.

Architecture for Long-Context Stability

To prevent drift, you must engineer mechanisms that force the model to continuously re-anchor itself to the core constraints.

Periodic State Refreshers

If you need a 10,000-line output, do not ask for it in a single generation step. Break the task into discrete chunks.

Send the output of Chunk 1 back to the model as context for Chunk 2, but re-inject the core constraints at the bottom of the new prompt. This guarantees the distance between the generation point and the rule set remains effectively short.

Hard Projections Over Soft Instructions

If your output requires a strict structure, stop asking the model nicely in unstructured English. Use schema enforcement.

A soft constraint looks like: “Always return the data as a list of dictionaries.” A hard projection enforces JSON mode or uses grammar-constrained decoding at the API level.

Hard projections operate beneath the prompt layer. They force the probability mass of non-compliant tokens to zero. Tooling for this is now standard: use OpenAI’s Structured Outputs for API-level schema enforcement, or open-source frameworks like Outlines and Guidance for mathematically guaranteed generation paths. When you are operating at scale, probability is the only guarantee you have.

If you need to test constraint architecture without racking up API costs, use a local Prompt Scaffold. It isolates your system rules from your task data before you start paying for generation. Validating the baseline structure locally prevents expensive structural failures from propagating deep into a long-context run.

The “Token Buffer” Strategy

When generating long-form reasoning, models lose track of their objective if the reasoning chain becomes too convoluted.

Require the model to output a state summary token block every few hundred lines. Force it to print out exactly what phase of the problem it is currently solving, and what the immediate next step must be.

<current_state>

<completed_phase>Data extraction from source document</completed_phase>

<active_constraints>JSON format only, no passive voice, max 500 words</active_constraints>

<next_step>Synthesize extracted entities into target schema</next_step>

</current_state>(Note: These explicit XML tags serve a dual purpose. They act as an attention anchor for the LLM, and they provide structured markers for your downstream parsers to safely monitor task progress and trigger programmatic alerts if the state goes off-rail.)

This aligns directly with the mechanics discussed in Chain-of-Thought Prompting Explained. By writing its current state into the context, the model creates a fresh, localized anchor. The attention mechanism now has a highly relevant, mathematically dense summary located just a few tokens away from the generation point.

Case Study: Drift in Action

Consider a prompt tasked with summarizing 50 legal cases sequentially, maintaining a formal tone and strict bulleted format.

The Naive Approach (Fails by case 12): A single prompt containing all 50 cases and the rule “Use a formal tone and output exactly 3 bullet points per case.” By case 12, the model drops the formality. By case 20, the bullet points become numbered lists. By case 40, it merges distinct cases together. The prompt’s probabilistic weight was simply overwhelmed by the tokens generated for the first 11 cases.

The State-Managed Approach (Holds through case 50): The pipeline processes 5 cases per API call. At the end of each generation chunk, the prompt forces the model to output a strictly structured state tracker before continuing:

<current_state>

<progress>Cases 1-5 completed. 45 cases remaining.</progress>

<active_constraints>

- Output exactly 3 bullet points per case

- Tone: Formal legal analysis

</active_constraints>

<next_action>Ready to process cases 6-10</next_action>

</current_state>The state is dynamically refreshed. The attention mechanism never gets far enough away from the core rule set to forget it.

Practical Pitfall Avoidance Guide

- Translate negative constraints to positive rules. LLMs spend significant probabilistic effort processing “not” or “never”. A negative rule creates a flat distribution; a positive rule concentrates it.

| Weak Constraint (Drifts) | Hard Constraint (Anchors) |

|---|---|

| Do not write long sentences. | Limit all sentences to under 20 words. |

| Do not use marketing jargon. | Use only grade-8 level vocabulary. |

- Place remaining negative constraints at the end. If you must use a rule like “Never use the word ‘ensure’”, put it physically at the very end of your prompt. Recency bias dictates that the most proximal tokens exert the highest influence on immediate generation.

- Limit the context window artificially. Just because you have a 128k context window doesn’t mean you should use it for generation. Providing 100k tokens of background material flattens the probability distribution. Extract only what you need first, then generate.

- Track the exact token cost. Long-context failure loops get expensive fast. Before running a multi-step generation pipeline across large documents, benchmark the expected token usage with an LLM Cost Calculator. Chunking your pipeline not only prevents drift, but enables “checkpointing”—if generation fails halfway, you resume from the last successful chunk rather than starting over and re-paying for the entire 128k context. Optimize chunk sizes to fit both the attention span and the budget.

The Anti-Drift Checklist

Do not launch a long-context task without verifying these three structural properties:

- Architectural Isolation: Is the task broken into discrete generation chunks rather than a single massive output?

- State Anchoring: Is the model forced to write a

<current_state>block every few hundred tokens to reset its attention focus? - Hard Constraint Enforcement: Are formatting rules enforced via Structured Outputs or grammar engines rather than polite English requests?

Precision at length is not a matter of model size. It is a matter of strict constraint management across the entire generation lifecycle.

Support Applied AI Hub

I spend a lot of time researching and writing these deep dives to keep them high-quality. If you found this insight helpful, consider buying me a coffee! It keeps the research going. Cheers!